by Jimmy Alfonso Licon

1. Introduction

At the center of Severance—an Apple TV show that has garnered high acclaim—is a simple yet unsettling idea that a person’s mental life can be cleanly partitioned, creating distinct selves who share a body but not a memory. In the Severance fictional universe, one self—the outie—lives an ordinary life outside of the work environment, while the other self—the innie—exists only at work, mostly confined to a narrow environment and deprived of autobiographical context outside of that circumscribed band of life. Each self is conscious with the capacity for a full range of emotions, the ability to deliberate, with preferences and reactions to reasons and a long-term memory of the history of that self (barring outside interference). And yet, each is largely ignorant of the comings and goings of the other selves with whom they share a body.

The moral unease this generates is immediate, and it is one of the striking features of the show. One of the questions that kept occurring to me, as a moral philosopher, is whether an outie who authorizes the procedure (whereby other selves are created via brain partitioning, but where the innie bears most of the cost) has wronged themselves or someone else. If the innie resists but the outie insists, whose agency governs, and who bears the wrongs that such severing can inflict? Can consent survive such fragmentation, or does it collapse once the brain is severed into multiple selves?

For some important background: while Severance is fictional, the structure of the problem is not. Philosophers and neuroscientists have grappled over the last few decades, if not centuries, with real cases of cognitive division—most famously in split-brain patients whose corpus callosum was severed to treat intractable epilepsy. Thomas Nagel’s discussion of these cases remains especially instructive. When the hemispheres are disconnected, behavior often fragments in ways that resist tidy metaphysical interpretation. One hand reaches for an object while the speaking hemisphere denies seeing anything at all. A subject offers confident explanations for actions whose true causes are inaccessible to them. The result appears to be partial duplication of the self and mental life in such cases, with centers of perception, intention, and response that coexist within a single organism, sometimes cooperating and sometimes competing.

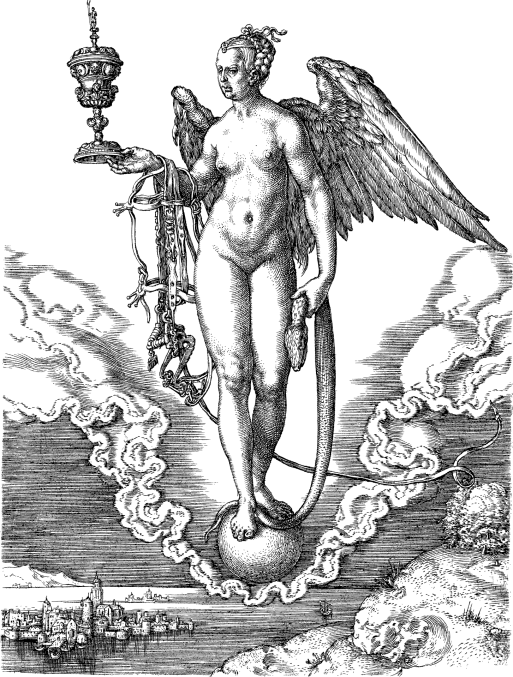

Nagel’s fundamental worry in his 1971 article is that our ordinary picture of persons, as unified subjects of experience and action, fits uneasily with a physical story that allows for selves to apparently duplicate and dissociate, like a neurological version of Walt Whitman’s famous quip in the poem Song of Myself that he ‘contained multitudes.’ If the unity of consciousness and self fractures, then how should we think about agency, responsibility, and identity across time? These philosophical questions, taken on their own, are complicated enough, but are only made more so by such cases.

And that is likely a key reason why Severance has such appeal: it takes that tension and makes it an organizing principle of a social world. It asks what happens when fragmentation is not accidental or pathological, but intentional, institutionalized, and incentivized. And it presses a further question: once unity is gone, what ethical resources remain?


2. The Principal–Agent Problem on the Inside

One helpful way to frame the moral structure of severance is through the lens of the principal–agent problem. In economics, this problem arises when one party (the agent) is empowered to act on behalf of another (the principal), but their incentives diverge. Think of the investment banker who makes decisions that enrich herself but hurt her client, or the lawyer who gives bad legal advice because he was forced onto the case and would rather see his client convicted, or the construction company that charges full price but cuts corners on material quality and safety to save a few bucks. The agent’s decisions affect the principal’s welfare, yet the agent may benefit from acting otherwise. Much of professional ethics—from fiduciary law to medical consent—exists to manage precisely this kind of misalignment between incentives.

What Severance dramatizes is a principal–agent problem within a single person. The outie decides whether to undergo severance, whether to continue employment, and under what conditions the innie will live. The innie, meanwhile, experiences the consequences of those decisions but has no meaningful voice in shaping them. The incentives are starkly asymmetric, and while the outie enjoys the paycheck, the career benefits, and the psychological insulation, the innie is stuck bearing the confinement, monotony, and loss of autonomy.

Take one of the main characters as an example. (Spoilers ahead). Helly R’s storyline makes this asymmetry impossible to ignore. Her innie’s repeated attempts to escape—even to the point of self-harm, to the point of attempted suicide—are met not with reconsideration of the difference in power and decision-making between her and her outie, but instead with reinforcement. Her outie refuses to withdraw consent, despite overwhelming evidence that the innie experiences her existence as intolerable and does not consent to her plight. What makes this particularly disturbing is that one of the selves bears most of the costs, while the other enjoys the benefits and autonomy.

The problem here is, to a large degree, the divergence in incentives between the different selves, together with the asymmetry in autonomy. The innie cannot meaningfully protest except in extreme ways, like threatening suicide in a labor market crunch or other dramatic pushback, and only after the innies discover the situation that they are in. Moreover, most outies, with a few exceptions, do not understand what they are authorizing. This situation makes clashes between the principal (the innie) and the agent (the outie) inevitable.


3. Self-Deception as a Design Feature

This moral vulnerability is compounded by the role of self-deception, a psychological feature that is ubiquitous among humans but is only exacerbated by the principal-agent problem. Outies are both ignorant of their innies’ experiences and systematically encouraged to remain ignorant. The procedure of severing the brain into discrete selves with distinctive and exclusive memory systems acts as an epistemic and moral buffer, shielding the outie from guilt. This aligns with what we know about motivated ignorance. People often avoid information because it threatens their self-conception. Learning what one’s choices actually cost can be destabilizing to one’s sense of moral identity and ability to convince others of their benevolence and trustworthiness. Severance, both the show and the procedure in the show, exploits this tendency by design.

Here Severance pushes a familiar phenomenon to its logical extreme. Ordinary self-deception involves reinterpretation, selective attention, and rationalization. Severance replaces these soft mechanisms with a hard cognitive barrier. The result is a self that can sincerely affirm moral commitments while acting in ways that systematically violate them because the evidence of violation is located elsewhere. Nagel’s split-brain cases offer a useful parallel. When subjects confabulate reasons for actions initiated by the non-speaking hemisphere, they are making up a sincere but false story that fills in the explanatory gaps about their actions with the best evidence they have. Similarly, outies construct narratives about their work lives, untethered from the reality that their innies inhabit, to make coping and thriving easier.


4. The Golden Rule Under Conditions of Similarity

If severance undercuts the usual means of moral coordination, what remains? One contender is the Golden Rule: treat others as you would want to be treated. At first glance, this may seem too thin or too familiar to bear much philosophical weight. But in the context of severance, it acquires a distinctive justification due to the many similarities between outies and innies that share a brain and a body. The Golden Rule functions as a heuristic for moral action: it asks an agent to model the experience of another and to let that model inform how they act.

Philosophers of mind have long debated how we understand others, whether by inference from a theory or by imaginative simulation. The Golden Rule asks for simulation, imagining oneself in another’s position, and this is precisely what makes the rule apt in cases of severance, because the innie and outie are biologically continuous, psychologically overlapping, and temperamentally aligned. If there is any case in which one is well-placed to apply the Golden Rule, it is a case of extreme similarity. And because of those many similarities, classic objections to the Golden Rule (sadomasochists, idiosyncratic preferences, radical divergence) lose much of their force here. The problem, then, is that outies typically lack the incentives (and to some extent lack the knowledge) to apply the Golden Rule to their innies for the reasons we have been exploring.


5. Reputations with Me, Myself, and I

There is also a more pragmatic route to the same conclusion, one grounded more in incentives than in idealized moral motivation. Even in the absence of direct communication, innies infer their outies’ priorities from the structure of their environment: the quality of food, the level of autonomy, the tone of managerial interaction. Here the logic of reputation reasserts itself in an unexpected place. Ordinarily, reputations matter because they affect how others choose to interact with us. In Severance, the “other” is oneself under conditions of amnesia. Yet an innie who experiences their outie as indifferent or exploitative has little reason to cooperate. Resistance, sabotage, and withdrawal become predictable responses, as we see with several of the characters, to varying degrees, throughout the show.

From this perspective, treating the innie well is both altruistic and instrumentally rational. A content innie is more likely to perform well, to comply with directives, and to avoid disruptive behavior. The outie’s actions thus constitute a form of signaling across a cognitive barrier, conveying whether the innie is regarded as a partner or a disposable resource. This mirrors broader insights from signaling theory: costly, hard-to-fake actions convey information about underlying intentions. In Severance, providing humane working conditions is costly and signals respect. And like most reputational mechanisms, it works even when motives are mixed.

We already accept that we owe duties to our temporally distant selves: to save for retirement, to avoid foreseeable harm, to preserve future options. Severance intensifies this familiar problem by turning temporal distance into cognitive distance. The innie is a future self who never remembers being past. Governing such a relationship requires principles that can operate without memory or reciprocity. Here the Golden Rule and reputational incentives converge. Both offer ways to stabilize cooperation across fragmentation. Neither requires moral purity; both function under conditions of limited information and mixed motives. And both are compatible with a sober view of human psychology.


6. Is Severance Always Wrong?

Given these concerns, it is tempting to conclude that severance is always morally impermissible. Kantian objections about treating persons as mere means loom large. Consequentialist worries about asymmetric suffering are hard to dismiss. Theological concerns about the integrity of the soul add further weight. And yet, Severance itself complicates this verdict. Not all severed lives are depicted as clearly wrong or abusive. Burt’s severance resembles a form of spiritual discipline: he chooses to intentionally divide his self so that one part could be oriented toward divine devotion, echoing mystical traditions like Meister Eckhart’s conception of an inward-facing soul oriented toward God.

Other cases are imaginable. A national security operative, for instance, might choose severance to prevent coercive extraction of information. Here the innie’s ignorance would serve a protective function, and both selves might endorse the arrangement under reflection. What distinguishes these cases is not the technology itself, but the structure of consent, respect, and role-recognition. The moral fault line, then, is between treating the innie as a moral stakeholder and treating the innie as a tool.

Nagel worried that our attempts to integrate mental life with physical explanation would likely encounter principled limits like the unity of consciousness. Severance explores what happens when those limits are crossed in practice. If selves can be divided, whether due to corrective surgery or labor market necessity, then the ethics of self and self-care take on a whole new significance. If agency fragments, responsibility must be distributed rather than denied under the right incentive structures.

Severance asks us to confront both the (potential) future of work and the question of what we morally owe to ourselves, whether in the future or in a severed part of the brain. In such a world, fidelity becomes increasingly important: fidelity to principles that survive forgetfulness, and to selves that, like our future selves, we cannot see but nonetheless have power over.

~

Bio:

Jimmy Alfonso Licon is a philosophy professor at Arizona State University, where he teaches courses in epistemology, ethics, and philosophy of law. His research and public writing, which has appeared in venues like AIER, Mises Wire, The Pamphlet, and many other places, focus on how incentives and information shape moral and intellectual life. He has also taught at Georgetown, University of Maryland, and Towson University.